The Rust Gap in Low-Code: What 16 AI Builders Reveal About Metatable

12 of 16 platforms promise faster development. Zero generate production Rust. The positioning landscape has a hole the size of a language.

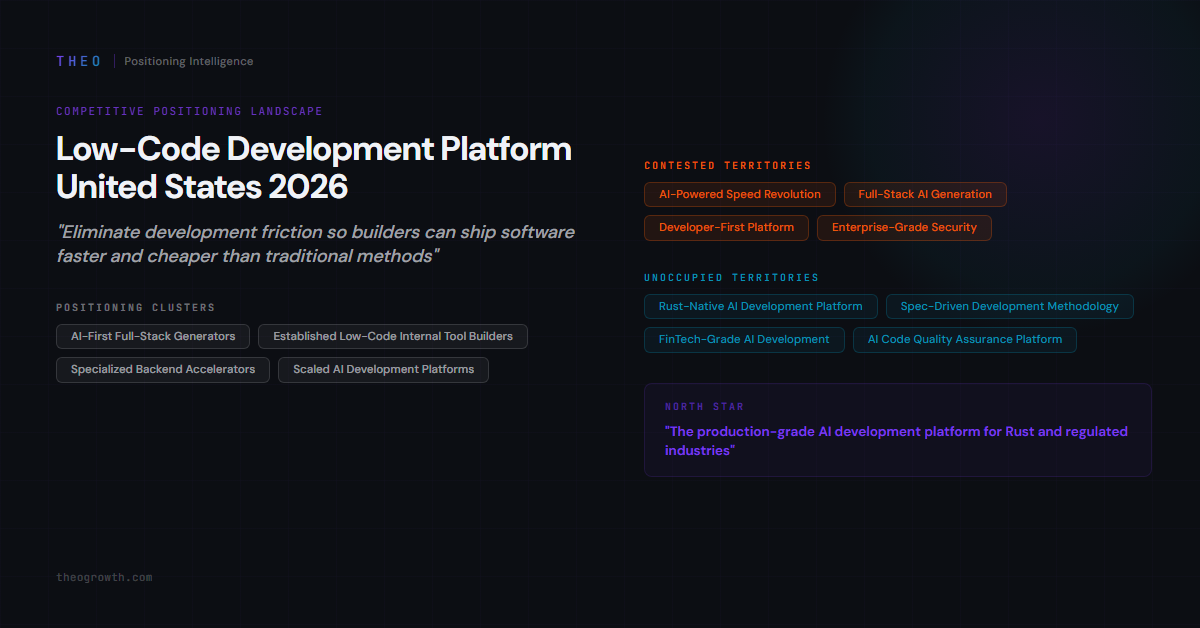

The low-code development platform category in the United States runs on a single thesis: eliminate friction so builders ship faster and cheaper. THEO’s discovery phase identified 112 competitors across this landscape. The 16 highest-priority competitors were analyzed in depth: 5 competing directly for the same positioning territory, 11 targeting adjacent buyers with overlapping claims. The category is converging fast, and almost everyone is converging on the same point.

Twelve Platforms, One Promise

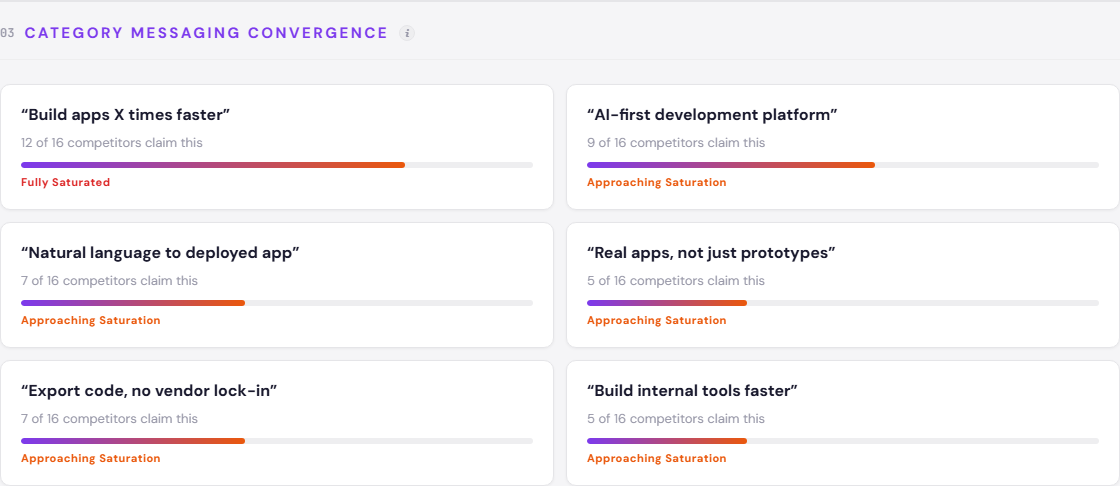

The dominant buyer frame in this market is speed. Faster development. Faster shipping. Faster everything. Among the 16 analyzed platforms, 12 claim some version of “build apps X times faster.” Nine call themselves “AI-first development platforms.” Seven promise natural language to deployed applications.

That is not a competitive landscape. That is a messaging monoculture.

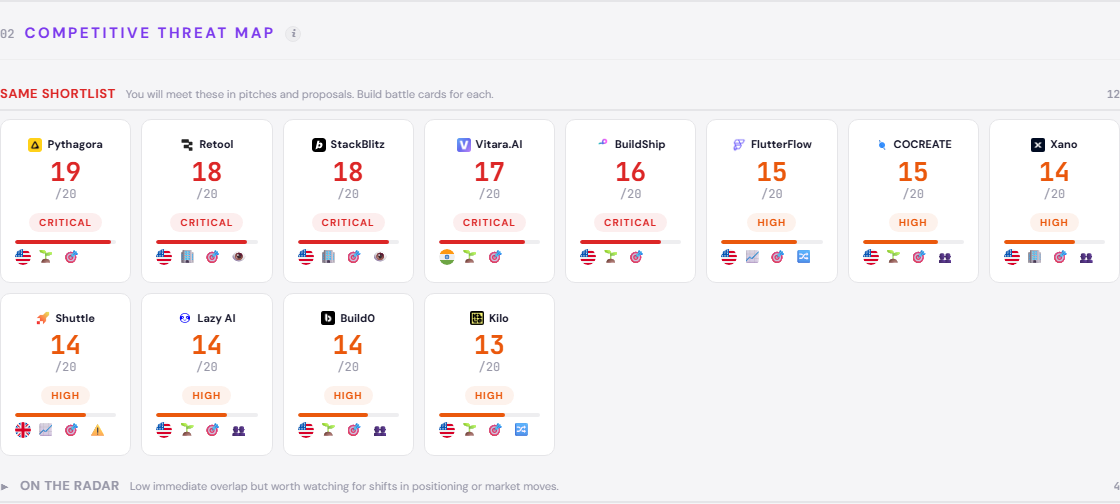

Pythagora sits at the top of the positioning threat index with a score of 19 - the closest structural mirror to Metatable. Same startup stage. Same “AI software engineer” narrative. Same collision course. Retool and StackBlitz both score 18, but they compete asymmetrically - massive user bases, established trust, different buyer segments. Vitara.AI at 17 and BuildShip at 16 round out the five platforms competing directly for the same positioning territory.

Of the 16 analyzed platforms, 12 claim “build apps X times faster.” Seven promise “natural language to deployed app.” Five claim “real apps, not just prototypes.” The messaging overlap is nearly total.

The convergence goes deeper than headlines. Seven of the 16 analyzed competitors claim code export and no vendor lock-in. Five insist their output produces “real apps, not just prototypes” - a defensive claim that reveals how little buyers trust AI-generated code. The entire category is arguing about speed and output quality while assuming those are the only dimensions that matter.

This is what the full interactive positioning landscape maps in detail - every competitor’s threat score, territory claims, and strategic rationale. Explore it here.

The Cluster Metatable Almost Belongs To

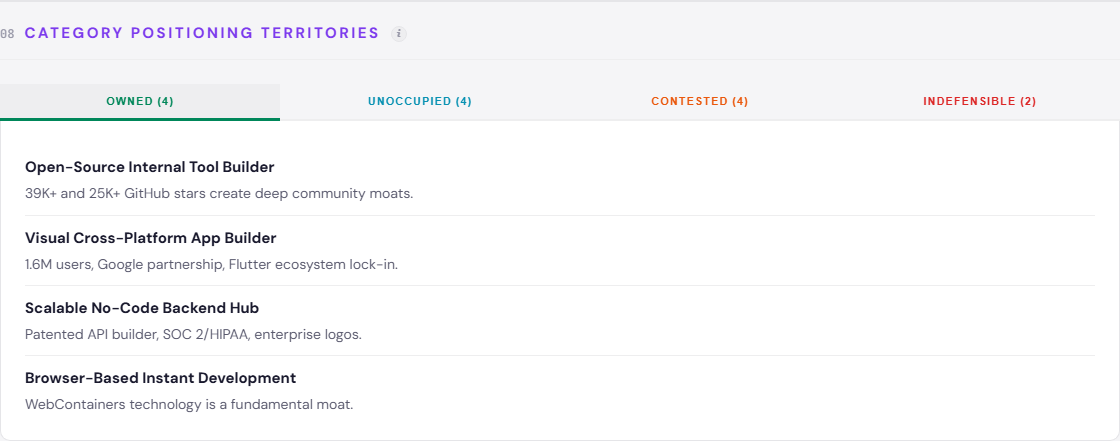

Metatable sits at the edge of the AI-First Full-Stack Generators cluster - a group that includes Pythagora, Vitara.AI, Lazy AI, Build0, and COCREATE. Internal competition within this cluster is HIGH. Every member generates full-stack applications from prompts or specs. Every member targets the same buyer: solo developers and small teams.

Edge position matters. Metatable is not trapped inside this cluster the way Build0 and COCREATE are - platforms that will spend the next 18 months fighting each other for identical positioning territory. The edge means Metatable has one foot in the cluster and one foot somewhere else. The question is where that other foot lands.

Three assumption gaps in this landscape have HIGH client fit for Metatable: developer team collaboration and knowledge transfer, AI-generated code quality assurance and auditability, and regulatory compliance as a built-in platform capability. No analyzed competitor addresses any of them. These are not incremental improvements. They are entire dimensions the category has decided not to think about.

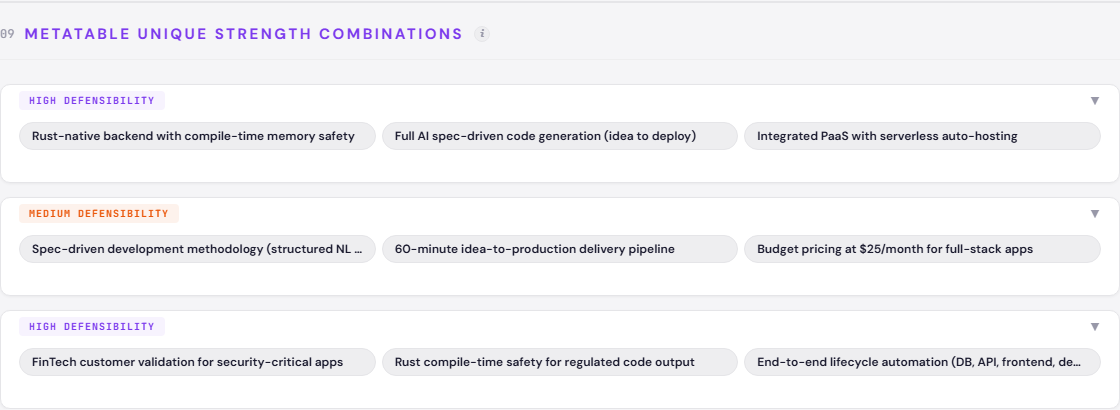

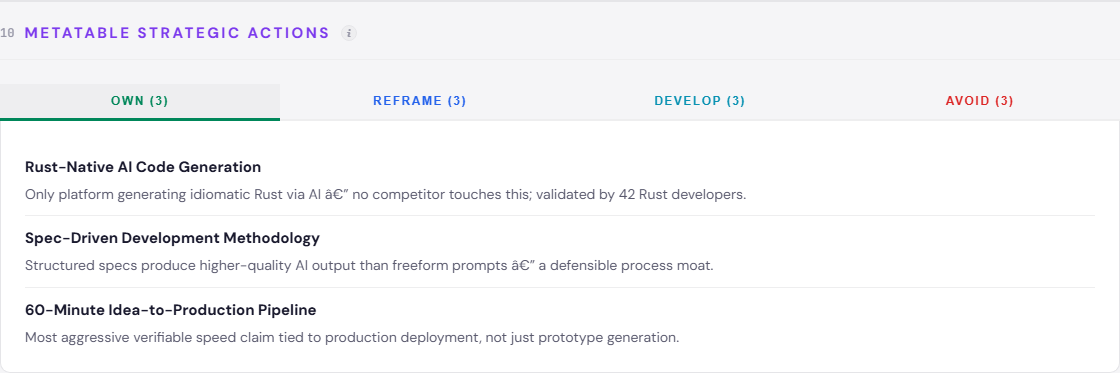

Metatable’s most defensible combination is specific: Rust-native backend generation, full AI spec-driven code generation, and integrated PaaS. No other analyzed platform combines these three. The second combination - spec-driven methodology, 60-minute delivery, and $25/month pricing - is defensible but less structurally unique. The third - FinTech validation, Rust compile-time safety, and end-to-end lifecycle automation - is where the real moat could form, if the validation is real.

What Happens When Bolt Wins

The AI-First Full-Stack Generators cluster is not the only competitive front. The Established Low-Code Internal Tool Builders - Retool, Appsmith, ToolJet, UI Bakery - operate with HIGH internal competition but in a structurally different market. They build internal tools. Metatable builds external applications. The collision happens when Retool decides internal tools are not enough.

A more immediate structural tension: the fork between open-source core and closed-source SaaS. Metatable sits on the closed-source side. Appsmith, ToolJet, and Kilo champion open-source transparency as the foundation for AI-powered development trust. This is not a fringe position. Developer tool discovery increasingly runs through open-source communities. A closed-source AI code generator has to earn trust through output quality alone - there is no “inspect the source” shortcut.

The category is also splitting between visual builders and spec/prompt-driven generation. Metatable is on the spec/prompt side. FlutterFlow and the visual builders occupy the other. This fork matters because it determines the buyer: visual builders attract non-developers, spec-driven platforms attract developers who want to move faster. Different buyers, different trust signals, different growth channels.

The risk that should keep Metatable’s team awake: StackBlitz’s Bolt achieving winner-take-most dynamics. Bolt already operates at scale. It already owns the “Browser-Based Instant Development” territory. If Bolt becomes the default entry point for AI-assisted development - the way Figma became the default for design - Metatable gets categorized as “a smaller Bolt alternative.” That framing is a death sentence for a startup.

An emerging category frame complicates this further. “Vibe coding” - software creation as creative expression, not engineering - is NASCENT, championed by Vitara.AI and Lazy AI. If this frame gains traction, it reshapes buyer expectations entirely. Builders stop evaluating code quality and start evaluating creative output. Metatable’s Rust-native precision becomes irrelevant in a market that values vibes over verification.

The Territory Nobody Wants

Four positioning territories sit unoccupied among the 16 analyzed platforms: Rust-Native AI Development Platform, Spec-Driven Development Methodology, FinTech-Grade AI Development, and AI Code Quality Assurance Platform.

That last one is telling.

Twelve platforms promise speed. Seven promise code export. Five promise production-ready output. Not one has claimed the territory of proving that AI-generated code is actually good. The entire category assumes output quality is a feature, not a positioning territory. Metatable’s north star - moving from “another AI app builder” to “the production-grade AI development platform for Rust and regulated industries” - targets exactly this gap.

Three pillars define the path: Rust-Native Intelligence (the only AI platform generating idiomatic, memory-safe Rust for production), Spec-to-Ship in 60 Minutes (specs become deployed applications faster than any competitor), and FinTech-Proven Security (compile-time safety validated in regulated environments). The first pillar is the most urgent. It is the only one no competitor can replicate without rebuilding their entire code generation stack.

The reframes matter as much as the claims. “Open-source transparency builds trust” becomes “compile-time safety beats inspectable code.” “Prompt-to-app in seconds” becomes “spec-to-production in 60 minutes.” “Massive user base proves quality” becomes “FinTech validation proves production trust.” Each reframe shifts the evaluation criteria away from where Retool and Bolt are strongest.

What Metatable must build: SOC 2 Type II certification is the highest-priority development action. The FinTech-grade security narrative collapses without formal certification - it becomes one more unproven claim, as indistinguishable as the speed claims 12 platforms in this landscape already make. Team collaboration on spec projects is medium priority but addresses one of the three assumption gaps no competitor has touched.

What to avoid: “Generic AI App Builder” and “10x Faster Development.” Both are indefensible territories. The first puts Metatable in a category with 15 other platforms. The second is a claim 12 of them already make. Neither creates separation. Neither survives contact with a buyer who has seen three demos that morning.

What to watch: AI-native capabilities becoming table stakes within 12 months. The incumbents - Retool, StackBlitz, FlutterFlow - have larger user bases and established trust. When they close the AI capability gap, the only defensible positions will be the ones built on dimensions they cannot easily replicate. Rust-native generation is one. Regulated-industry validation is another. Speed is not.

The Bottom Line

The low-code AI platform category has a structural blind spot: nobody is building for the developer who needs their AI-generated code to pass a compliance audit. Metatable, with its Rust-native stack, is the only analyzed platform that could credibly fill that gap - but credibility requires certification, not claims. The window is open. The question is whether Metatable builds the proof before Bolt builds the habit.

Explore the full Low-Code Development Platform positioning landscape for Metatable - interactive competitor analysis, territory maps, and strategic actions: available here.

The positioning threat index is a 0-20 composite score measuring four dimensions: how far a competitor’s ecosystem reaches into the client’s market (Ecosystem Reach), how much their messaging overlaps with the client’s claims (Messaging Convergence), how directly they compete for the same buyer segments and territories (Category Overlap), and whether their trajectory is accelerating toward the client’s position (Trajectory Threat). Each dimension is weighted and scored from competitor intelligence data. A score above 15 is classified Critical.
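
To make the scoring concrete, here is a minimal sketch of how a 0-20 composite with a Critical cutoff could be computed. It is an illustration, not THEO's actual formula: the weights are not published here, so the sketch assumes each of the four dimensions is scored 0-5 and summed with equal weight. The class name, field names, and the example numbers are hypothetical.

```python
from dataclasses import dataclass

# Assumption: each dimension is scored 0-5 and summed with equal weight,
# which yields the 0-20 range. The real weighting is not given in the text.
MAX_PER_DIMENSION = 5
CRITICAL_THRESHOLD = 15  # from the text: a score above 15 is Critical


@dataclass(frozen=True)
class ThreatScores:
    ecosystem_reach: int
    messaging_convergence: int
    category_overlap: int
    trajectory_threat: int

    def total(self) -> int:
        """Sum the four dimension scores after range-checking each one."""
        parts = (
            self.ecosystem_reach,
            self.messaging_convergence,
            self.category_overlap,
            self.trajectory_threat,
        )
        for value in parts:
            if not 0 <= value <= MAX_PER_DIMENSION:
                raise ValueError(f"dimension score out of range: {value}")
        return sum(parts)

    def is_critical(self) -> bool:
        """Critical means strictly above the threshold, per the text."""
        return self.total() > CRITICAL_THRESHOLD


# Hypothetical competitor, not taken from the analysis.
example = ThreatScores(5, 5, 5, 4)
print(example.total(), example.is_critical())
```

Under these assumptions, a competitor scoring exactly 15 would not be Critical, because the cutoff is "above 15" rather than "15 or above."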

Client fit is assessed on three criteria: whether the brand already has operational capabilities to credibly claim the territory, whether the territory aligns with the brand’s current market position and buyer expectations, and whether competitive pressure makes the territory strategically urgent. HIGH means all three criteria are met.
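
The HIGH rule above reduces to a simple all-three check, sketched below. The class and field names are hypothetical labels for the three criteria. The text defines only HIGH, so the sketch does not invent names for lower levels and instead reports which criteria are unmet.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ClientFit:
    # Hypothetical field names for the three criteria described in the text.
    has_operational_capabilities: bool
    aligns_with_market_position: bool
    is_strategically_urgent: bool

    def unmet(self) -> list[str]:
        """Names of the criteria that are not satisfied, in field order."""
        return [name for name, met in asdict(self).items() if not met]

    def is_high(self) -> bool:
        """HIGH requires all three criteria to be met."""
        return not self.unmet()


# Hypothetical territory assessment, not taken from the analysis.
fit = ClientFit(True, True, False)
print(fit.is_high(), fit.unmet())
```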

All competitor data is sourced from publicly available information: company websites, published pricing pages, press releases, news coverage, and credible third-party sources (Crunchbase, industry analyst reports, LinkedIn company profiles). No proprietary or confidential data was used.

This analysis contains three layers: observed facts (what competitors publicly communicate and claim), analytical interpretation (how we classify and score those observations using our framework), and strategic recommendations (what we suggest the focus brand should do). Scores, cluster assignments, and territory classifications are our analytical interpretation of public data - not statements of objective market truth. Strategic actions (OWN, REFRAME, DEVELOP, AVOID) are recommendations, not predictions.

This analysis was produced using THEO Growth, a positioning intelligence platform. If you work with brands and need competitive positioning analysis, see how it works.